Conventional tools for visual analytics emphasize a linear production workflow and lack organic "work surfaces." A better surface would simultaneously support collaborative visualization construction, data and design exploration, and reasoning. To facilitate data-driven design within existing design tools such as card sorting, we introduce Composites, a tangible, augmented reality interface for constructing visualizations on large surfaces. In response to the placement of physical sticky-notes, Composites projects visualizations and data onto large surfaces. Our spatial grammar allows the designer to flexibly construct visualizations through the use of the notes. Similar to affinity-diagramming, the designer can "connect" the physical notes to data, operations, and visualizations which can then be re-arranged based on creative needs. We develop mechanisms (sticky interactions, visual hinting, etc.) to provide guiding feedback to the end-user. By leveraging low-cost technology, Composites extends a working surface to support a broad range of workflows without limiting creative design thinking.

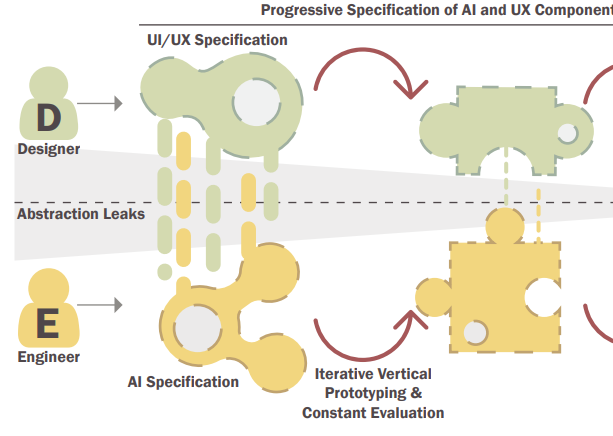

In conventional software development, user experience designers and engineers collaborate through separation of concerns (SoC): designers create human interface specifications, and engineers build to those specifications. However, we argue that human-AI (HAI) systems thwart SoC because human needs must shape the design of the AI interface and the underlying AI sub-components and training data. How do designers and engineers currently collaborate on AI and UX design? To find out, we interviewed 21 HAI professionals (UX researchers, AI engineers, data scientists, and managers) across 14 organizations about their collaborative work practices and associated challenges. We find that hidden information encapsulated by SoC challenges collaboration across design and engineering concerns. Practitioners describe inventing leaky abstractions (ad-hoc representations exposing low-level design and implementation details) to "puncture" SoC and share information across expertise boundaries. We identify how leaky abstractions are employed to collaborate at the AI-UX boundary and formalize the process of creating and using leaky abstractions.

Video is an effective medium for knowledge communication and learning. Yet active viewing and note-taking from videos remain a challenge. Specifically, during note-taking, viewers find it difficult to extract essential information such as representation, composition, motion, and interactions of graphical objects and narration. Current approaches rely on creating static screenshots, manual clipping, and manual annotation/transcription. Additionally, note-takers may need to repeatedly pause and rewind the video, disrupting their active viewing process. We propose VideoSticker, a tool designed to support visual note-taking by extracting expressive content from videos as 'motion stickers'. VideoSticker implements automated object detection and tracking, linking objects to the transcript, and rapid extraction of stickers across space, time, and events of interest. VideoSticker's two-pass approach allows viewers to capture high-level information uninterrupted and later extract specific details. We demonstrate the usability of VideoSticker for a variety of videos and note-taking needs.

Various tools and practices have been developed to support practitioners in identifying, assessing, and mitigating fairness-related harms caused by AI systems. However, prior research has highlighted gaps between the intended design of these tools and practices and their use within particular contexts, including gaps caused by the role that organizational factors play in shaping fairness work. In this paper, we investigate these gaps for one such practice: disaggregated evaluations of AI systems, intended to uncover performance disparities between demographic groups. By conducting semi-structured interviews and structured workshops with thirty-three AI practitioners from ten teams at three technology companies, we identify practitioners' processes, challenges, and needs for support when designing disaggregated evaluations. We find that practitioners face challenges when choosing performance metrics, identifying the most relevant direct stakeholders and demographic groups on which to focus, and collecting datasets with which to conduct disaggregated evaluations. More generally, we identify impacts on fairness work stemming from a lack of engagement with direct stakeholders, business imperatives that prioritize customers over marginalized groups, and the drive to deploy AI systems at scale.

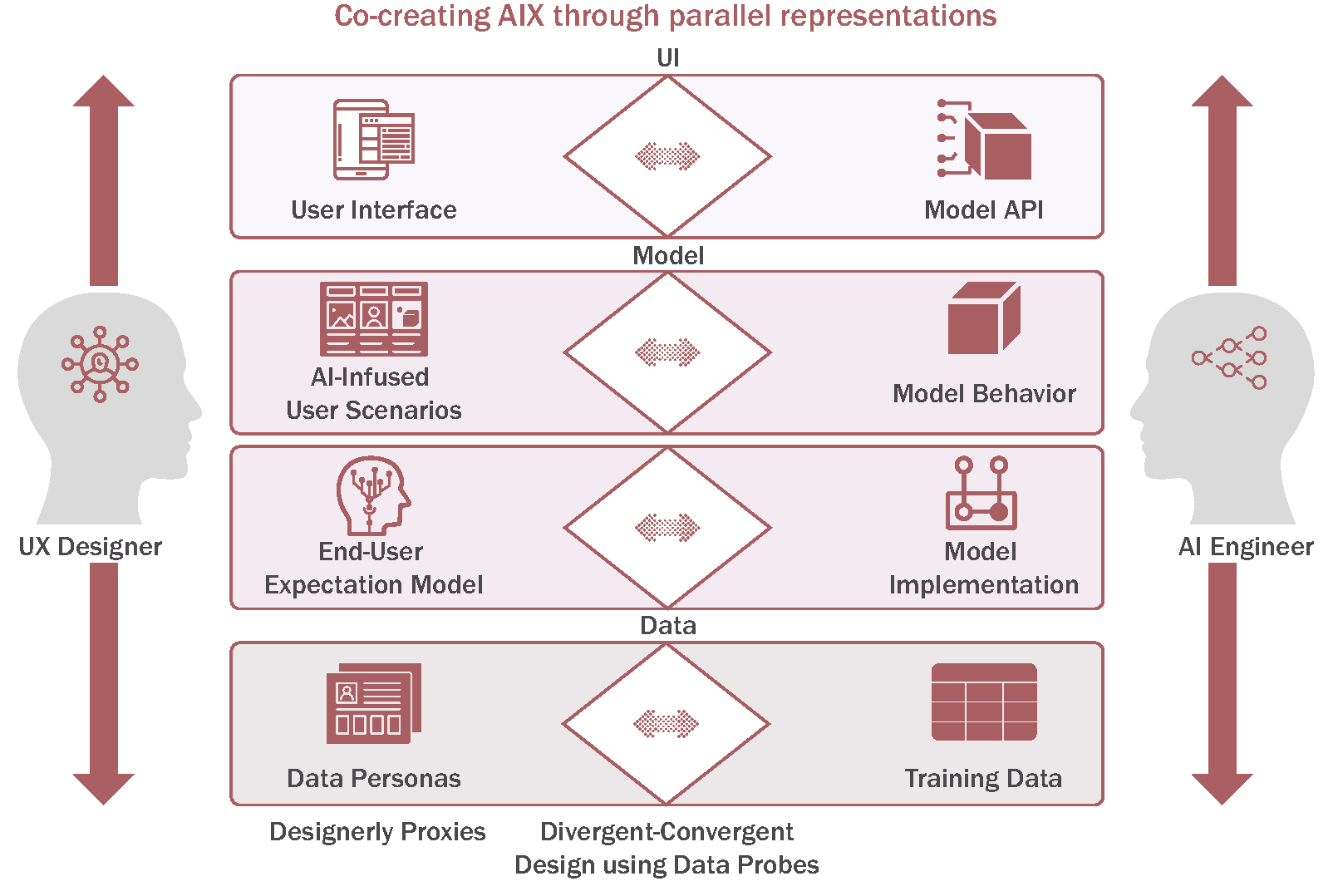

Thinking of technology as a design material is appealing. It encourages designers to explore the material's properties to understand its capabilities and limitations, a prerequisite to generative design thinking. However, as a material, AI resists this approach because its properties only emerge as part of the user experience design. Therefore, designers and AI engineers must collaborate in new ways to create both the material and its application experience. We investigate the co-creation process through a design study with 10 pairs of designers and engineers. We find that design 'probes' with user data are a useful tool in defining AI materials. Through data probes, designers construct designerly representations of the envisioned AI experience (AIX) to identify desirable AI characteristics. Data probes facilitate divergent design thinking, material testing, and design validation. Based on our findings, we propose a process model for co-creating AIX and offer design considerations for incorporating data probes in AIX design tools.

Best Paper Award

When prototyping AI experiences (AIX), interface designers seek useful and usable ways to support end-user tasks through AI capabilities. However, AI poses challenges to design due to its dynamic behavior in response to training data, end-user data, and feedback. Designers must consider AI's uncertainties and offer adaptations such as explainability, error recovery, and automation vs. human task control. Unfortunately, current prototyping tools assume a black-box view of AI, forcing designers to work with separate tools to explore machine learning models, understand model performance, and align interface choices with model behavior. This introduces friction to rapid and iterative prototyping. We propose Model-Informed Prototyping (MIP), a workflow for AIX design that combines model exploration with UI prototyping tasks. Our system, ProtoAI, allows designers to directly incorporate model outputs into interface designs, evaluate design choices across different inputs, and iteratively revise designs by analyzing model breakdowns. We demonstrate how ProtoAI can readily operationalize human-AI design guidelines. Our user study finds that designers can effectively engage in MIP to create and evaluate AI-powered interfaces during AIX design.

Best Paper Award

Learning from text is a constructive activity in which sentence-level information is combined by the reader to build coherent mental models. With increasingly complex texts, forming a mental model becomes challenging due to a lack of background knowledge, and limits in working memory and attention. To address this, we are taught knowledge externalization strategies such as active reading and diagramming. Unfortunately, paper-and-pencil approaches may not always be appropriate, and software solutions create friction through difficult input modalities, limited workflow support, and barriers between reading and diagramming. For all but the simplest text, building coherent diagrams can be tedious and difficult. We propose Active Diagramming, an approach extending familiar active reading strategies to the task of diagram construction. Our prototype, texSketch, combines pen-and-ink interactions with natural language processing to reduce the cost of producing diagrams while maintaining the cognitive effort necessary for comprehension. Our user study finds that readers can effectively create diagrams without disrupting reading.

People often use text in their drawings to communicate their ideas. For visually impaired people, adding textual information to tactile graphics is challenging. Labeling in braille is a laborious process and clutters the drawings. Audio labels provide an alternative way to add text. However, digital drawing tools for visually impaired people have not examined the use of audio for creating labels. We conducted a study comprising three tasks with 11 visually impaired adults. Our goal was to understand how participants explored and created labeled tactile graphics (both braille and audio), and their interaction preferences. We find that audio labels were quicker to use and easier to create. However, braille labels enabled flexible exploration strategies. We also find that participants preferred multimodal interaction commands, and report hand postures and movements observed during the drawing process for designing recognizable interactions. Based on our findings, we derive design implications for digital drawing tools.

Best Paper Award

Despite the availability of software to support Affinity Diagramming (AD), practitioners still largely favor physical sticky-notes. Physical notes are easy to set up, can be moved around in space, and offer flexibility when clustering unstructured data. However, when working with mixed data sources such as surveys, designers often trade off the physicality of notes for analytical power. We propose Affinity Lens, a mobile-based augmented reality (AR) application for Data-Assisted Affinity Diagramming (DAAD). Our application provides just-in-time quantitative insights overlaid on physical notes. Affinity Lens uses several different types of AR overlays (called lenses) to help users find specific notes, cluster information, and summarize insights from clusters. Through a formative study of AD users, we developed design principles for data-assisted AD and an initial collection of lenses. Based on our prototype, we find that Affinity Lens supports easy switching between qualitative and quantitative 'views' of data, without surrendering the lightweight benefits of existing AD practice.

Details-on-demand is a crucial feature in the visual information-seeking process but is often only implemented in highly constrained settings. The most common solution, hover queries (i.e., tooltips), is fast and expressive but is usually limited to a single mark (e.g., a bar in a bar chart). 'Queries' to retrieve details for more complex sets of objects (e.g., comparisons between pairs of elements, averages across multiple items, trend lines, etc.) are difficult for end-users to invoke explicitly. Further, the output of these queries requires complex annotations and overlays, which need to be displayed and dismissed on demand to avoid clutter. In this work, we introduce SmartCues, a library to support details-on-demand through dynamically computed overlays. For end-users, SmartCues provides multitouch interactions to construct complex queries for a variety of details. For designers, SmartCues offers an interaction library that can be used out-of-the-box and can be extended for new charts and detail types. We demonstrate how SmartCues can be implemented across a wide array of visualization types and, through a lab study, show that end-users can effectively use SmartCues.

Performance animation is an expressive method for animating characters through human performance. However, character motion is only one part of creating animated stories. The typical workflow also involves writing a script, coordinating actors, and editing recorded performances. In most cases, these steps are done in isolation with separate tools, which introduces friction and hinders iteration. We propose TakeToons, a script-driven approach that allows authors to annotate standard scripts with relevant animation events like character actions, camera positions, and scene backgrounds. We compile this script into a story model that persists throughout the production process and provides a consistent structure for organizing and assembling recorded performances and propagating script or timing edits to existing recordings. TakeToons enables writing, performing and editing to happen in an integrated and interleaved manner that streamlines production and facilitates iteration. Informal feedback from professional animators suggests that our approach can benefit many existing workflows supporting individual authors and production teams with many different contributors.

Studies on technology adoption typically assume that a user's perceptions of the usability and usefulness of a technology are central to its adoption. Specifically, in the case of accessibility and assistive technology, research has traditionally focused on the artifact rather than the individual, arguing that individual technologies fail or succeed based on their usability and fit for their users. Using a mixed-methods field study of smartphone adoption by 81 people with visual impairments in Bangalore, India, we argue that these positions are dated in the case of accessibility, where a non-homogeneous population must adapt to technologies built for sighted people. We found that many users switch to smartphones despite being aware of their significant usability challenges. We propose a nuanced understanding of perceived usefulness and actual usage based on need-related social and economic functions, which is an important step toward rethinking technology adoption for people with disabilities.

The focus of intelligent systems is on "making things easy" through automation. However, for many cognitive tasks, such as learning, creativity, or sensemaking, there is such a thing as too easy or too automated. Current human-AI design principles, as well as general usability guidelines, prioritize automation and efficient task execution over human effort. However, this type of advice may not be suitable for designing systems that need to balance automation with other cognitive goals. In these cases, designers lack the tools they need to consider the trade-offs between automation, AI assistance, and human effort. My dissertation looks at using models from cognitive psychology to inform the design of intelligent systems. The first system, Florum, looks at automation after human effort as a strategy to facilitate learning from science text. The second system, TakeToons, explores automation as a complementary strategy to human effort to support creative animation tasks. A third set of systems, SmartCues and Affinity Lens, uses AI as a last-mile optimization strategy for human sensemaking tasks. Based on these systems, I am looking to develop a design framework that (1) classifies threats across different levels of design, including automation, user interface, expectations from AI, and cognition, and (2) offers ways to validate design decisions.

Shared decision-making is a process that requires active participation from the patient in making treatment-related decisions [5]. Through this process, both patients and clinicians develop a shared understanding of the patients' lifestyle choices and how they affect symptoms, in order to make informed treatment-related decisions. However, there are communication and process barriers to developing this understanding, including lack of medical knowledge on the part of the patients and lack of standard processes for clinicians to follow. With Data Dialog, we propose a data-driven approach to information exchange between patients and clinicians, using visualizations as 'boundary objects' for communication and collaboration. We outline a number of scenarios in which Data Dialog can be useful, and discuss opportunities and challenges that need to be addressed.

Chinese paintings are deeply rooted in cultural context. The problem is that, outside of Chinese culture, the uniqueness, meaning, and value of these paintings are largely lost. Our design, titled "Rice Paper", helps bridge the cultural disconnect between the creators of traditional Chinese paintings, guohua, and non-Chinese viewers. It leverages an iPad application to facilitate the sharing of large quantities of artistic context for traditional Chinese paintings in the form of tangible, printed booklets, making the cultural context that breathes life into a Chinese painting more accessible to a wider audience.

Over the past few years, there has been tremendous advancement in fitness tracking systems such as pedometers and heart rate monitors, and collecting and interacting with personal health or wellness data is a growing research topic within the Human-Computer Interaction (HCI) community. While fitness tracking systems have advanced into on-body sensing modalities, the process of data reporting and intervention is still largely done through mobile and web applications, using traditional methods of data visualization such as graphs and charts. This has resulted in a disconnect between data and its context. This work-in-progress, "Magic Mirror", explores how to retrieve and visualize health data using the body as a reference frame.